Michael Klemm - High Performance Parallel Runtimes

- Book: High Performance Parallel Runtimes

- Publisher: De Gruyter

- Year: 2021

De Gruyter Textbook

ISBN 9783110632682

e-ISBN (PDF) 9783110632729

e-ISBN (EPUB) 9783110632897

Bibliographic information published by the Deutsche Nationalbibliothek

The Deutsche Nationalbibliothek lists this publication in the Deutsche Nationalbibliografie; detailed bibliographic data are available on the Internet at http://dnb.dnb.de.

© 2021 Walter de Gruyter GmbH, Berlin/Boston

Mathematics Subject Classification 2010: 34-04, 35-04, 92C45, 92D25, 34C28, 37D45,

Today's world is a parallel world. Parallelism is ubiquitous. From the smallest devices, like processors that enable the Internet of Things, to the largest supercomputers, almost all devices now provide an execution environment with multiple processing elements. Thus, they require programmers to write parallel code that can exploit the parallelism available in the hardware. This ubiquity also means that it is necessary to implement runtime environments that support such parallel programs. In this book, we discuss the issues involved in building parallel runtime systems so that most (application) programmers don't have to worry about the complicated low-level details of parallel programming, but rather mainly concern themselves with the, unfortunately still complicated, higher-level issues!

We will cover the fundamental building blocks on which a parallel programming language relies, and discuss how they interact with modern machine architectures to help you understand how to provide high-performance implementations of these building blocks. Obviously, this also requires that you understand:

- What the sensible performance measures for each construct are.

- What the theoretical limits of performance are, given the properties of the underlying hardware.

- How to measure the performance of both the hardware and the code.

- How to use measurements of the hardware properties to design software that will perform well.

Throughout the book, we will show some interesting effects of the way modern processors are designed and the (performance) pitfalls that await programmers who have to reason about low-level machine details when they are implementing a high-performance parallel runtime system. You will see that some of the conclusions are counterintuitive: intuition will most certainly steer your thoughts about machine performance, and with them your implementation decisions, in the wrong direction.

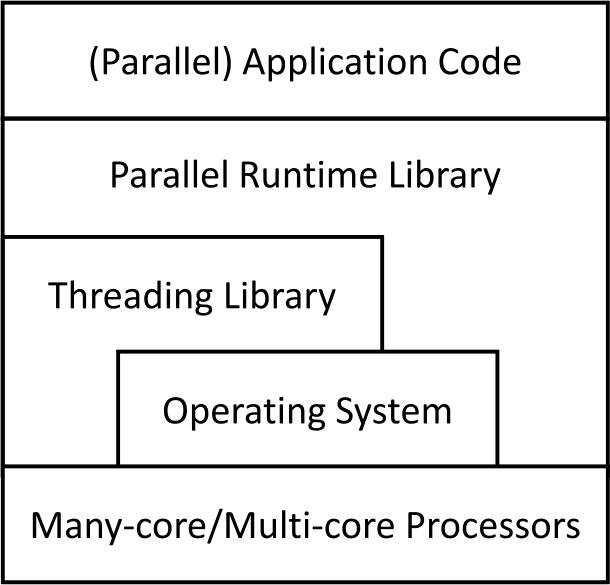

To better understand the structure of the book, please have a look at Figure 1.1. The lowest level in the stack is the multi-core processor that executes the code of the parallel runtime system and the application. In many cases, both the threading library and the parallel runtime system will use functionality provided by the multi-core processor for improved efficiency.

Figure 1.1 Layers of a parallel runtime system.

The remainder of the book generally approaches the topic from the top to the bottom. We start with the layer that is accessible to the (application) programmer: the parallel programming model. We discuss some of the design choices and how they affect the general structure of the software stack that implements a parallel programming model. The corresponding chapter briefly introduces some of the key concepts of parallel programming models for this book. Don't be disappointed that this is not an in-depth introduction to parallel programming; it assumes that you already have a basic familiarity with parallel programming and only scratches the surface of the topic.

A separate chapter describes the basics of multi-core architectures. While this is, for sure, the lowest level (even below the software stack!), covering the machine-level details early on in the book seems useful, as some of the implementation choices and algorithms that we will present are clearly motivated by how modern processors work and how they behave when they are executing a parallel application.

Another chapter discusses some cross-cutting aspects that are usually needed in a parallel runtime system, like how to manage parallelism or how to do memory management.

The later chapters cover the details! These chapters dive deep into the specific aspects and the implementation of key concepts like mutual exclusion, atomic operations, barriers, reductions, and task pools. All of these chapters focus on how the implemented algorithms interact with the machine and what effect they cause in a modern processor. This should provide a clear picture of what you, as a low-level ninja programmer to be, will have to understand to be able to extract parallel performance. Ideally, this will lead to a much better use of the expensive machine that executes your parallel runtime system (and the parallel application on top of it).

Before you can think about implementing a parallel runtime system, you have to think about what the parallel programming model should look like. This is, of course, if you start from scratch. If your task is to implement an existing programming model, your options are somewhat more limited, as now the programmer-facing parts of the model are defined, though you may still have some flexibility about what the internals of your implementations will look like.

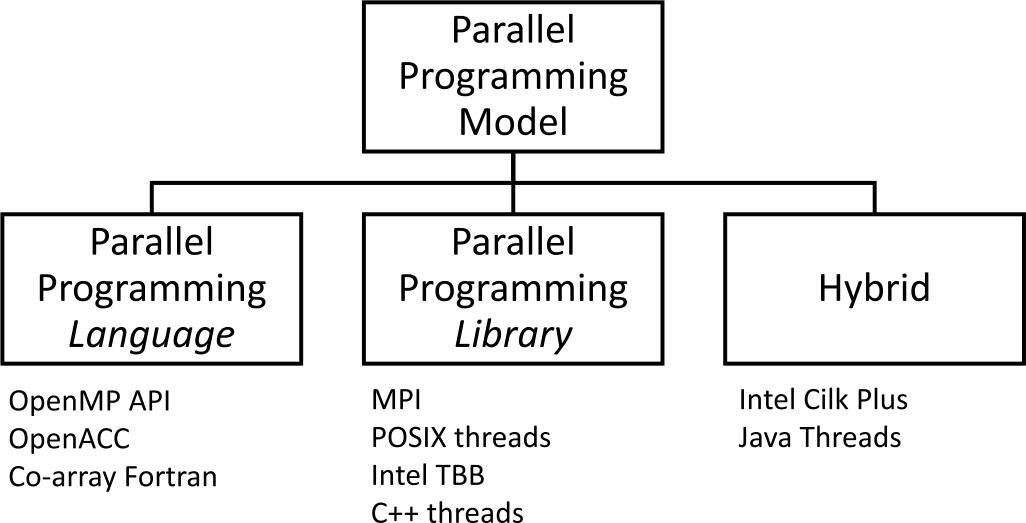

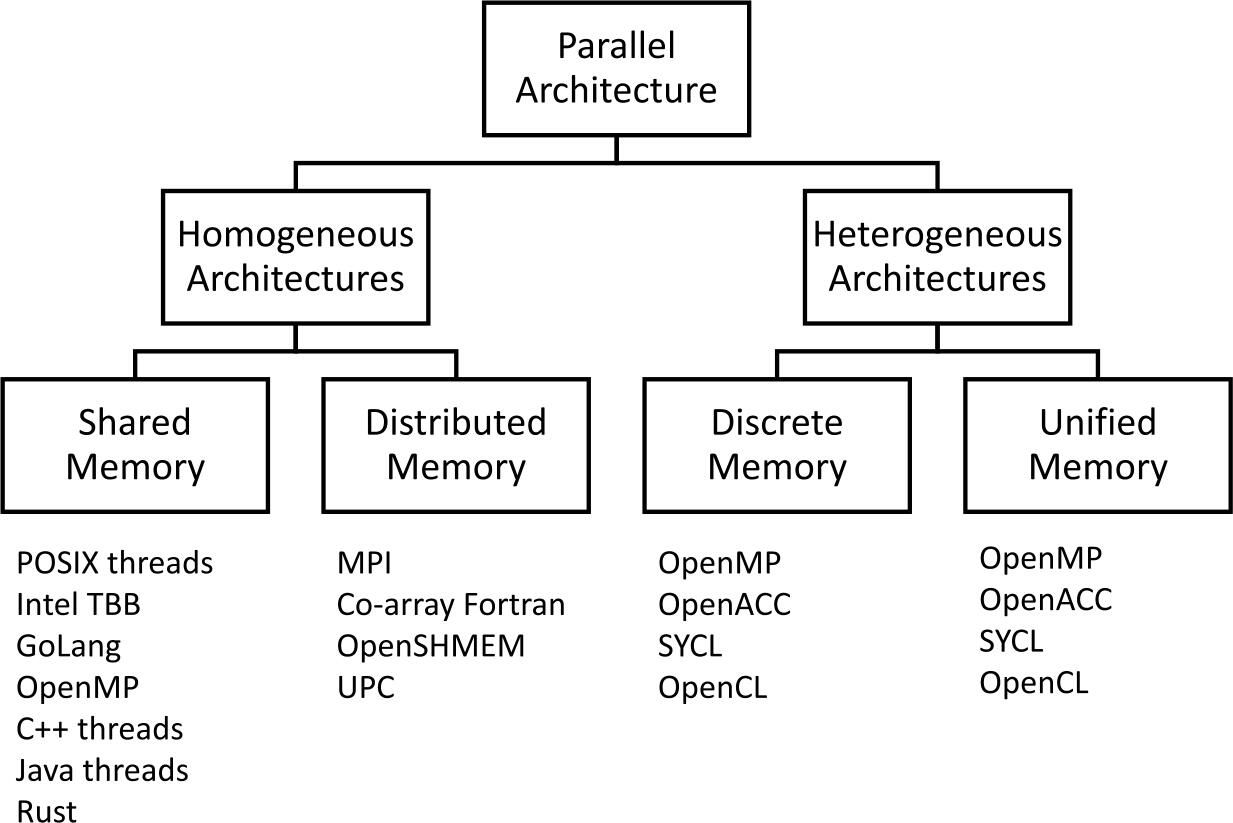

One of the main questions for the implementer of a parallel programming model is whether the programming model should be implemented as a library that provides an application programming interface (API) or as part of the programming language itself (or an extension of it). To make things even more complicated, you could also think of a hybrid model where parts of the model are expressed in the language while others are covered through API routines. Figure 1.3 shows a different categorization, by the parallel architecture that is targeted by these programming models.

As you may imagine, each of these designs can bring some benefits, but at the same time these may come at a price; that is, the design may have drawbacks with respect to the alternative implementation of the parallel programming model. Here, we review the two main design choices and discuss their benefits and drawbacks.

Injecting parallelism by using a library seems like an obvious choice. Since most programming languages support libraries, you can potentially perform parallel programming from any programming language. One particularly good example of this is the POSIX thread library pthreads, which brings multi-threading to C and other languages on POSIX-compatible systems, e.g., the GNU/Linux* operating system. Another example is Intel* Threading Building Blocks, which adds task-based parallelism to the C++ language.

Figure 1.2 Paradigms for parallel programming models.

Figure 1.3 Parallel programming models categorized by memory architecture.

So, what's wrong with this idea? The main issue with using an API-only approach to parallelism is that the compiler is not normally aware of the special meaning of the library calls that create parallelism, and therefore has to treat the API routines as black boxes whose content is hidden. Historically, this issue was exposed because the C language did not define a memory model, so the compiler could make assumptions about the code that destroy the user's intent. Although modern versions of the languages provide tools to alleviate the issue, you need to be aware of these issues and use the mitigations in your code.
